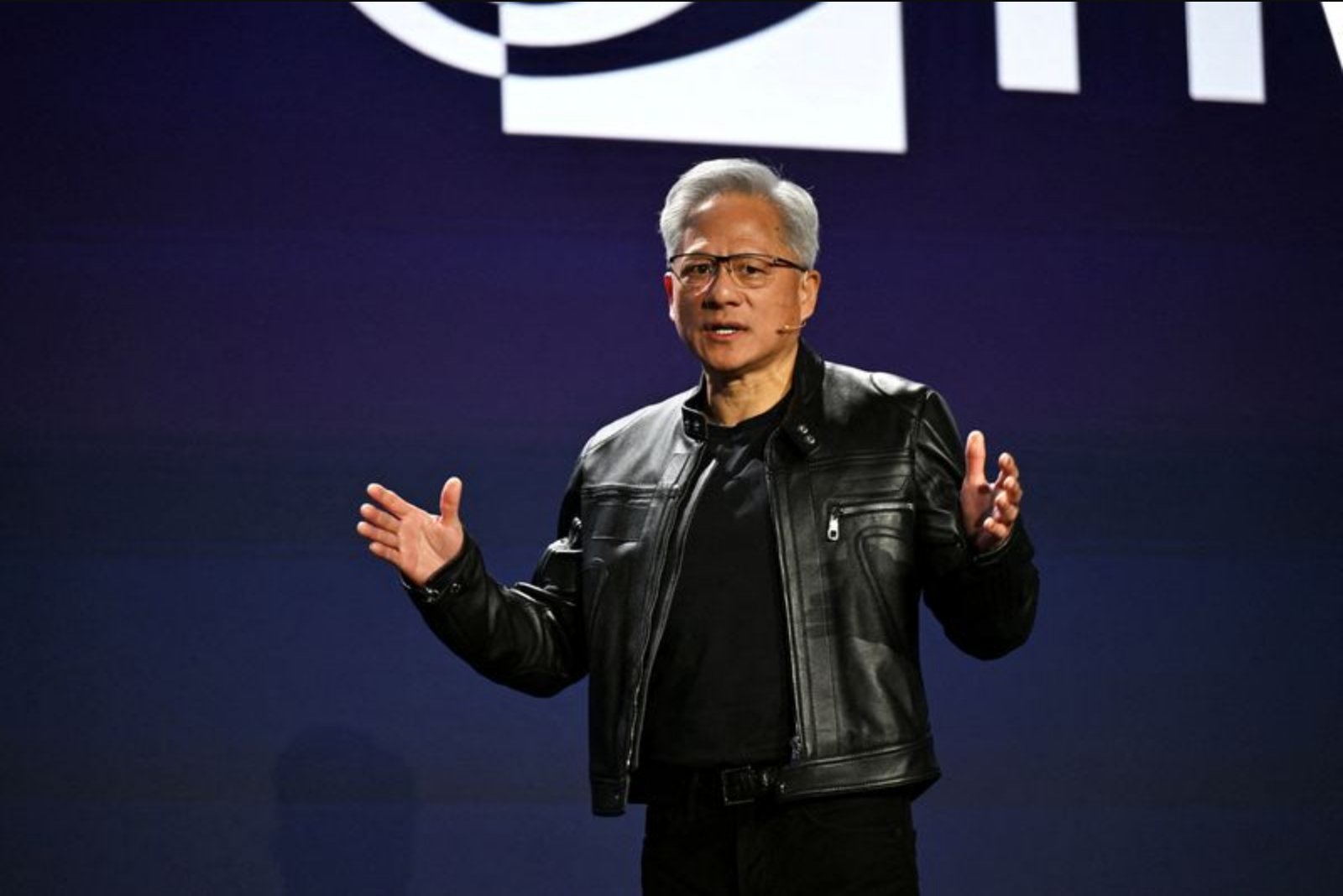

Jensen Huang, chief executive of Nvidia, is slated to present the company’s software and hardware plans at the firm’s annual developer conference in San Jose, California. The four-day GTC event opens with Huang’s keynote at a hockey arena that can seat more than 18,000 people, where he will outline how Nvidia intends to respond to a rapidly evolving artificial intelligence landscape.

Investors and industry watchers expect Huang to provide detail on a next-generation AI chip referred to as Feynman, a name that references American physicist Richard Feynman. Nvidia, which holds a market capitalization of more than $4.3 trillion, will likely use the conference platform to explain how Feynman fits into its broader strategy across training and inference workloads.

Topics at the event are expected to include data center deployments, Nvidia’s chip programming environment CUDA, digital assistants commonly described as AI agents, and applications of physical AI such as robots. The company is also expected to address technology tied to Groq, a chip start-up from which Nvidia licensed technology in December in a deal valued at $17 billion.

Groq specializes in fast, low-cost "inference" computing, in which a trained AI model applies what it has learned to answer questions or produce predictions in real time. That work is distinct from the heavyweight training workloads for which companies have recently spent large sums on specialized chips.

Companies including OpenAI, Anthropic and Meta Platforms have invested hundreds of billions of dollars in recent years purchasing chips to train their AI models. Those companies are now shifting toward serving hundreds of millions of users who will tap those models in production, a transition that places growing emphasis on inference performance and cost.

Analysts note that Nvidia faces stronger competition in the market for inference chips than in the market for chips used in AI training. Rivals, along with some of Nvidia’s own customers that are designing proprietary chips, are challenging the company’s market position, and analysts expect Nvidia to use GTC to shore up its defenses and clarify its product roadmap in response.

Despite heightened competition, Nvidia remains central to the wider AI ecosystem. Nations such as Saudi Arabia are reported to be building bespoke AI systems for their populations using Nvidia chips. The company is also among the few large U.S. firms that continue to publish open-source AI software, a practice that has strategic implications amid growing global competition.

Huang’s keynote is set for 11 a.m. Pacific Time (2 p.m. Eastern Time/1800 GMT).